As AI adoption accelerates across industries, security has become one of the most critical discussions among technology leaders. During a recent CTO Community event, cybersecurity expert Tavleen Oberoi shared deep insights into the OWASP Top 10 risks for Large Language Models (LLMs) and the defensive strategies organizations must adopt.

The session explored both the technical architecture of AI systems and the security vulnerabilities emerging with modern LLM-based applications.

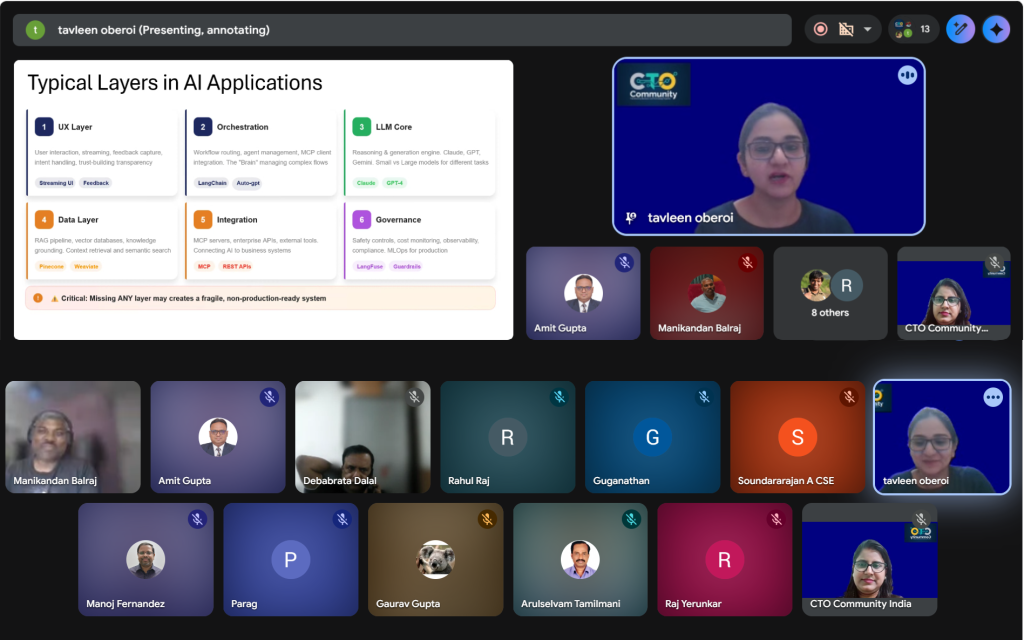

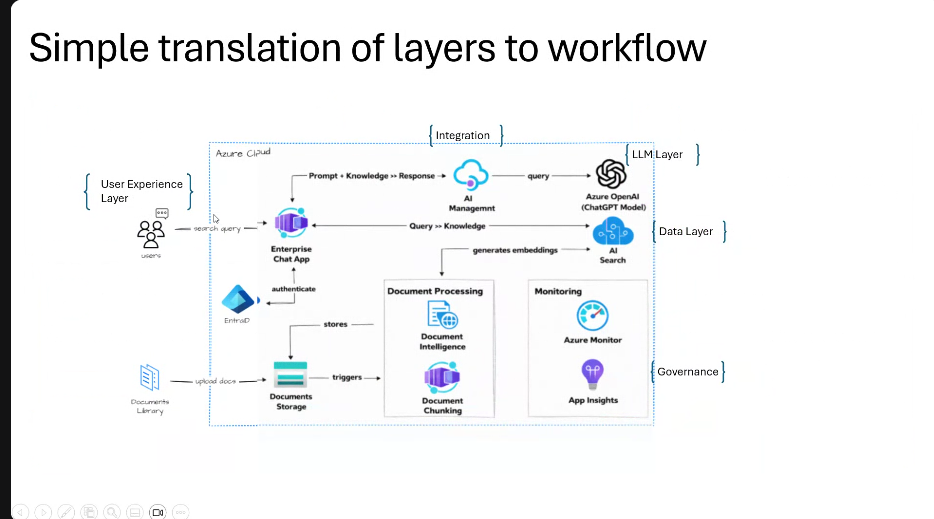

Understanding the Architecture of AI Systems

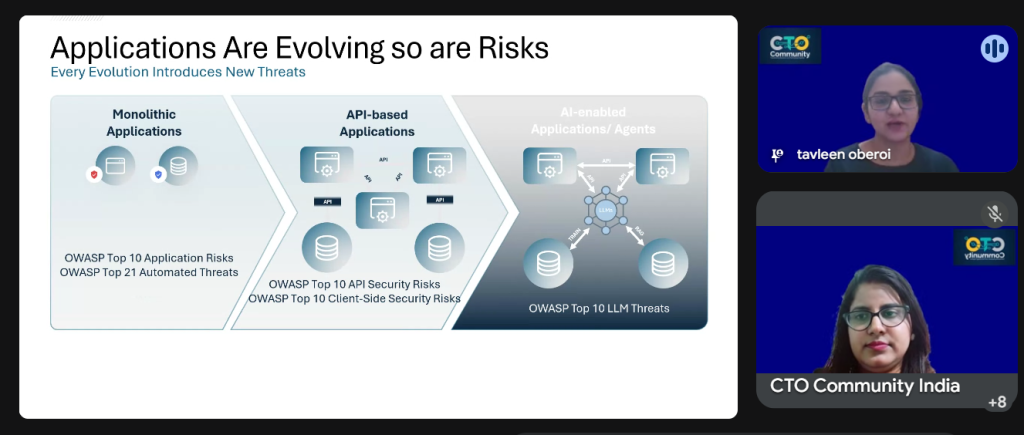

The discussion began with the evolution of application architecture and how modern AI systems are structured in multiple layers.

Two key layers highlighted were:

• LLM Core Layer – the central intelligence responsible for reasoning and generating responses.

• RAG (Retrieval-Augmented Generation) Data Layer – the layer that connects the LLM to enterprise knowledge sources such as vector databases and documents.

While this architecture enables powerful AI applications, it also introduces new security challenges.
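To make the two layers concrete, here is a minimal Python sketch of the retrieval step that feeds the LLM core. Everything in it is an illustrative assumption rather than something shown in the session: the word-count `embed` function stands in for a real embedding model, the in-memory `DOCS` list stands in for a vector database, and `build_prompt` only assembles the text that would be sent to an LLM.

```python
import math
from collections import Counter

# Hypothetical enterprise snippets; a real system would keep these in a vector database.
DOCS = [
    "Refunds are processed within five business days.",
    "VPN access requires a hardware security key.",
    "Quarterly reports are published on the finance portal.",
]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    cleaned = text.lower().replace(".", "").replace("?", "")
    return Counter(cleaned.split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, k: int = 1) -> list[str]:
    """RAG data layer: return the k documents most similar to the query."""
    q = embed(query)
    return sorted(DOCS, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_prompt(query: str) -> str:
    """Assemble the grounded prompt that would be passed to the LLM core layer."""
    context = "\n".join(retrieve(query))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

print(build_prompt("How do I get VPN access?"))
```

The sketch also shows where the new attack surface sits: whatever lands in `DOCS` is copied straight into the prompt, so a poisoned document reaches the model as trusted context.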

OWASP Top 10 LLM Security Risks

The session covered all ten critical vulnerabilities identified by OWASP for LLM-based systems:

• Prompt Injection

• Sensitive Information Disclosure

• Supply Chain Attacks

• Data & Model Poisoning

• Improper Output Handling

• Excessive Agency

• System Prompt Leakage

• Vector & Embedding Weaknesses

• Misinformation / Hallucination

• Unbounded Resource Consumption

These risks highlight how attackers can manipulate prompts, exploit AI integrations, or force systems to reveal confidential information.
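As one small illustration, Improper Output Handling comes down to treating model output as untrusted input to whatever consumes it next. The sketch below assumes the reply is rendered into an HTML page; `render_reply` is a hypothetical helper, and escaping is only one of several controls a real application would need.

```python
import html

def render_reply(model_output: str) -> str:
    """Escape model output before embedding it in an HTML page."""
    # html.escape neutralizes <, >, & and quotes so injected markup is shown as text.
    return f"<p>{html.escape(model_output)}</p>"

print(render_reply("<script>alert(1)</script>"))
```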

AI as a Defensive Tool

One interesting takeaway was that AI itself can strengthen cybersecurity.

AI systems can analyze massive volumes of logs and detect abnormal patterns faster than traditional tools. This enables early detection of threats such as ransomware and other sophisticated attacks through real-time event correlation and anomaly detection.
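The idea behind anomaly detection can be sketched with a plain statistical baseline. This is a deliberately simple z-score check, not the learned models the session had in mind, and the function name, threshold, and sample data are all assumptions made for illustration.

```python
from statistics import mean, stdev

def is_anomalous(baseline: list[int], value: int, threshold: float = 3.0) -> bool:
    """Flag a value that lies more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical failed-login counts per minute taken from authentication logs.
baseline = [3, 4, 2, 5, 3, 4, 3, 2, 4]

print(is_anomalous(baseline, 4))
print(is_anomalous(baseline, 90))
```

A production system would correlate many signals at once, but the principle is the same: learn what normal looks like, then flag departures from it.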

However, for high-risk scenarios such as financial or legal decisions, the session emphasized the importance of Human-in-the-Loop validation to reduce risks associated with AI hallucinations.

Protecting Proprietary Data from External AI Tools

Organizations must also address the risk of developers unintentionally exposing sensitive data to external AI tools.

A recommended three-layer protection approach includes:

• Developer training and awareness

• Data classification and controlled access rights

• Implementing a gateway or reverse proxy layer to filter prompts before they reach external LLM services

Together, these layers reduce the chance that proprietary information leaves the organization.
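The gateway layer can be sketched as a filter that scans each prompt before it is forwarded. The example below is a minimal illustration under stated assumptions: the two regular expressions, the `REDACTION_RULES` table, and the `redact_prompt` function are hypothetical, and a real gateway would take its rules from the organization's data classification policy rather than from a hard-coded list.

```python
import re

# Illustrative patterns only; real rules would come from the data classification policy.
REDACTION_RULES = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),  # shape of an AWS access key ID
}

def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and report which rules fired."""
    findings = []
    for label, pattern in REDACTION_RULES.items():
        prompt, count = pattern.subn(f"[{label}_REDACTED]", prompt)
        if count:
            findings.append(label)
    return prompt, findings

safe_prompt, findings = redact_prompt(
    "Debug this for alice@example.com using key AKIAABCDEFGHIJKLMNOP"
)
print(safe_prompt)
print(findings)
```

Returning the list of fired rules alongside the cleaned prompt lets the gateway log or block a request instead of silently rewriting it, which also feeds back into developer awareness.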

Accountability in AI-Driven Development

Another important discussion focused on responsibility in automated AI deployments.

Security issues caused by AI-generated code should not be attributed to a single developer. Instead, they must be managed through organizational policies and governance frameworks.

Best practices discussed include:

• Testing AI-generated outputs in sandbox environments

• Using segregated credentials and secret vaults

• Conducting Red Team security testing

Challenges in Testing RAG Systems

Testing AI pipelines, especially Retrieval-Augmented Generation (RAG) systems, remains challenging because LLMs are probabilistic. The same prompt may generate slightly different responses each time, making issue reproduction difficult in production environments.

This calls for new testing strategies tailored for AI systems.
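One such strategy is to stop asserting on exact strings and instead check a property of the answer across many runs. The sketch below assumes a hypothetical `fake_rag_answer` function that imitates a pipeline whose wording varies from call to call; in a real test it would be replaced by a call to the actual RAG system.

```python
import random
from typing import Callable

def fake_rag_answer(question: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a RAG pipeline whose wording varies between calls."""
    templates = [
        "Refunds are processed within 5 business days.",
        "You can expect your refund in 5 business days.",
        "It takes 5 business days to process a refund.",
    ]
    return rng.choice(templates)

def pass_rate(question: str, check: Callable[[str], bool], runs: int = 20, seed: int = 0) -> float:
    """Ask the same question `runs` times and return the fraction of answers passing `check`."""
    rng = random.Random(seed)  # fixed seed so a failing run can be replayed
    passed = sum(check(fake_rag_answer(question, rng)) for _ in range(runs))
    return passed / runs

def mentions_five_days(answer: str) -> bool:
    """Property check: the key fact must appear, whatever the phrasing."""
    return "5 business days" in answer

rate = pass_rate("How long do refunds take?", mentions_five_days)
print(f"pass rate: {rate:.0%}")
```

Against a real model, the test would compare the pass rate to an agreed threshold, and recording the prompt, retrieved context, and sampling settings for each run makes a failure easier to reproduce.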

The session reinforced an important message for technology leaders:

AI innovation must be accompanied by strong security practices.

Understanding the OWASP Top 10 LLM risks is an essential step toward building secure, trustworthy, and scalable AI systems.

Grateful to everyone in the CTO Community for actively participating in this knowledge-sharing session and contributing to meaningful discussions around responsible AI adoption.